Home lab: Smart devices traffic analysis

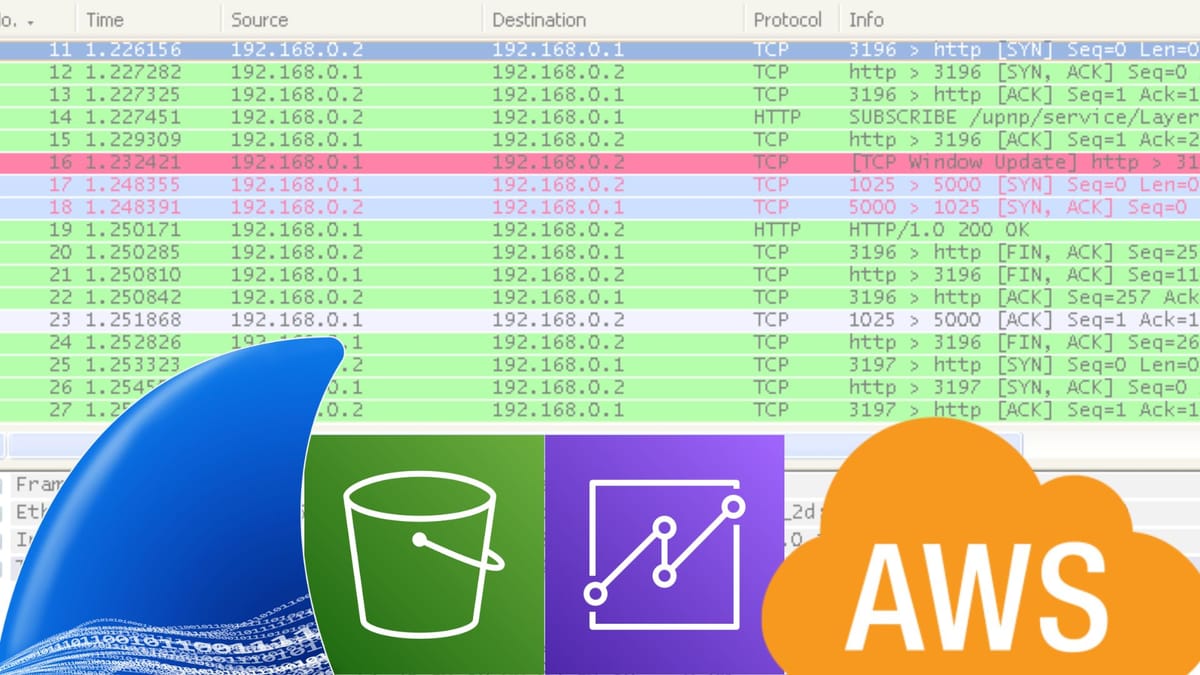

Over the past few weeks, I’ve been building a home lab project that combines network analysis and system design: a traffic analysis system for my smart devices at home.

To get something up and running quickly, I kept the design simple: one machine captures traffic from the smart devices in my house and automatically sends that data to AWS storage for further analysis.

Part I: Setting Up a Traffic Capture Device

Having a powerful, fault-tolerant server at home made this step easier. I deployed an Arch Linux virtual machine using Hyper-V on my Windows server and installed Wireshark on it. I then adjusted the capture settings to gather data only from the specific devices I was interested in, and made sure the data was saved in CSV format so the later analysis would be easier.
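For reference, a command-line version of that kind of filtered capture might look roughly like the sketch below. It uses tshark (Wireshark's command-line tool) instead of the GUI, and the interface name, device IP addresses, and list of exported fields are all placeholders rather than my actual settings. The script only prints the command so it can be reviewed before running.

```shell
#!/usr/bin/env bash
# Sketch only: interface and device IPs are placeholders, not real values.
INTERFACE="eth0"
DEVICES=("192.168.1.50" "192.168.1.51")

# Join device IPs into a capture filter of the form "host A or host B".
build_capture_filter() {
  local filter="" ip
  for ip in "$@"; do
    if [ -n "$filter" ]; then
      filter+=" or "
    fi
    filter+="host ${ip}"
  done
  printf '%s\n' "$filter"
}

CAPTURE_FILTER="$(build_capture_filter "${DEVICES[@]}")"

# Print the tshark command instead of running it.
# -f sets the capture filter; -T fields with -E/-e writes one CSV row per packet.
echo tshark -i "$INTERFACE" -f "\"$CAPTURE_FILTER\"" \
  -T fields -E header=y -E separator=, -E quote=d \
  -e frame.time_epoch -e ip.src -e ip.dst -e frame.len
```

Redirecting the real command's output to a file (for example `> capture.csv`) would give a CSV with one row per packet.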

Part II: AWS Setup

I needed a place to store my files, so I created an S3 bucket. Then I created an IAM user dedicated to uploading the CSV files from the capture VM.
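An upload-only identity like that could be expressed with a policy along these lines. This is a minimal sketch, not the exact policy I used: it assumes the bucket name from the upload script, and the user and policy names in the comments are hypothetical.

```shell
#!/usr/bin/env bash
BUCKET_NAME="homelab-wireshark-bucket"   # bucket name used by the upload script
POLICY_FILE="$(mktemp)"

# Write a policy that allows uploads (PutObject) into the bucket and nothing else.
write_upload_policy() {
  local bucket="$1" out="$2"
  cat > "$out" <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "s3:PutObject",
      "Resource": "arn:aws:s3:::${bucket}/*"
    }
  ]
}
EOF
}

write_upload_policy "$BUCKET_NAME" "$POLICY_FILE"
cat "$POLICY_FILE"

# With admin credentials, the policy could then be attached like this
# (user and policy names are hypothetical):
#   aws iam create-user --user-name pcap-uploader
#   aws iam put-user-policy --user-name pcap-uploader \
#     --policy-name pcap-upload-only --policy-document "file://$POLICY_FILE"
```

Because the policy grants only `s3:PutObject`, a leaked key from the VM could add objects but could not list, read, or delete what is already in the bucket.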

Back on the VM, I installed the AWS CLI and configured it with the IAM user's access key and secret key, so the VM talks to S3 using only that limited identity.
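One way to script that configuration, instead of answering the interactive `aws configure` prompts, is sketched below. The profile name and region are assumptions for illustration, and the functions are only defined here, not called.

```shell
#!/usr/bin/env bash
PROFILE="pcap-uploader"   # hypothetical profile name
REGION="us-east-1"        # assumption: use the bucket's actual region

# Store the IAM user's credentials under a named AWS CLI profile.
configure_uploader_profile() {
  local key_id="$1" secret="$2"
  aws configure set aws_access_key_id "$key_id" --profile "$PROFILE"
  aws configure set aws_secret_access_key "$secret" --profile "$PROFILE"
  aws configure set region "$REGION" --profile "$PROFILE"
}

# Print the ARN of the identity the profile resolves to.
verify_uploader_identity() {
  aws sts get-caller-identity --profile "$PROFILE" --query Arn --output text
}

# Example usage (prompts for the values so they stay out of shell history):
#   read -rp  "Access key ID: " KEY_ID
#   read -rsp "Secret access key: " SECRET
#   configure_uploader_profile "$KEY_ID" "$SECRET"
#   verify_uploader_identity
```

If the identity check prints the ARN of the upload-only user, the VM is using the intended credentials. Commands that should use this profile would then need `--profile pcap-uploader` or the `AWS_PROFILE` environment variable.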

Next, I wrote the following Bash script to compress the traffic files and upload them to S3.

    #!/usr/bin/env bash
    set -euo pipefail

    # ==== CONFIG ====
    BUCKET_NAME="homelab-wireshark-bucket"
    CAPTURE_HOSTNAME="$(hostname)"
    BASE_DIR="/var/log/pcaps"
    RAW_DIR="${BASE_DIR}/raw"
    UPLOADED_DIR="${BASE_DIR}/uploaded"

    # Create dirs if they don't exist
    mkdir -p "$RAW_DIR" "$UPLOADED_DIR"

    # Current date components for S3 prefix
    YEAR=$(date +%Y)
    MONTH=$(date +%m)
    DAY=$(date +%d)

    # Find any .pcap files that are older than 2 minutes
    # to avoid racing with an ongoing capture.
    find "$RAW_DIR" -maxdepth 1 -type f -name "*.pcap" -mmin +2 | while read -r PCAP_FILE; do
      FILE_NAME="$(basename "$PCAP_FILE")"
      TIMESTAMP=$(date +%Y%m%d-%H%M%S)

      # Build S3 key: year/month/day/host/filename.pcap.gz
      S3_KEY="${YEAR}/${MONTH}/${DAY}/${CAPTURE_HOSTNAME}/${FILE_NAME%.pcap}-${TIMESTAMP}.pcap.gz"

      echo "Processing $PCAP_FILE -> s3://${BUCKET_NAME}/${S3_KEY}"

      # Compress to a temp file
      GZ_FILE="${PCAP_FILE}.gz"
      gzip -c "$PCAP_FILE" > "$GZ_FILE"

      # Upload to S3
      aws s3 cp "$GZ_FILE" "s3://${BUCKET_NAME}/${S3_KEY}"

      # If upload succeeds, move originals to uploaded/
      mv "$PCAP_FILE" "$UPLOADED_DIR/"
      mv "$GZ_FILE" "$UPLOADED_DIR/"

      echo "Uploaded and archived $FILE_NAME"
    done

1) The script scans raw/ for .pcap files that are older than 2 minutes,

2) Compresses each file to .gz,

3) Uploads the compressed file to S3 under a date + hostname folder structure,

4) Moves the original and compressed files to uploaded/ as a local archive.
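The folder structure from step 3 can be looked at in isolation. The sketch below pulls the key-building logic into a small function. The function name `build_s3_key` and the sample host, date, and timestamp values are made up for illustration, and the date parts are passed in as arguments so the result is predictable.

```shell
#!/usr/bin/env bash
# build_s3_key HOST PCAP_PATH DATE_PREFIX TIMESTAMP
# Mirrors the script's key layout: year/month/day/host/name-timestamp.pcap.gz
build_s3_key() {
  local host="$1" pcap_path="$2" date_prefix="$3" timestamp="$4"
  local file_name
  file_name="$(basename "$pcap_path")"
  # ${file_name%.pcap} strips the .pcap suffix before the timestamp is appended.
  printf '%s/%s/%s-%s.pcap.gz\n' "$date_prefix" "$host" "${file_name%.pcap}" "$timestamp"
}

build_s3_key "capture-vm" "/var/log/pcaps/raw/iot.pcap" "2025/01/15" "20250115-103000"
# prints: 2025/01/15/capture-vm/iot-20250115-103000.pcap.gz
```

In the real script the last two arguments would come from `date +%Y/%m/%d` and `date +%Y%m%d-%H%M%S`. Putting the date first in the key keeps each day's captures grouped under one prefix, which makes it easy to list or expire them by day.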

Once the script was working, I scheduled it with cron to run every 10 minutes:

    */10 * * * * /usr/local/bin/upload_pcaps.sh >> /var/log/pcap_upload.log 2>&1

(to be continued...)